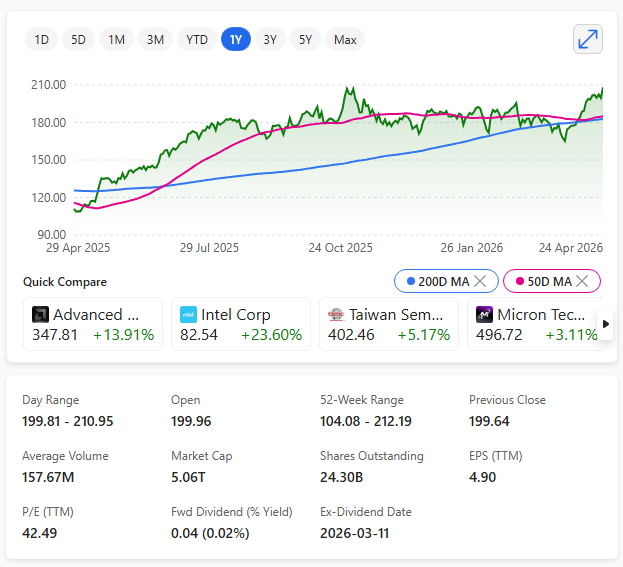

Nvidia has become the first company in history to reach a $5 trillion market capitalisation, driven by an extraordinary surge in global AI demand.

Nvidia’s stock jumped nearly 5% in a single session, lifting its valuation above the $5 trillion threshold and cementing its position as the world’s most valuable company by a wide margin.

Shares traded around $208–$209, briefly touching valuations as high as $5.12 trillion.

Game cards to major AI player

The milestone reflects Nvidia’s transformation from a gaming‑focused chipmaker into the backbone of the modern AI economy.

Demand for its advanced GPUs—particularly the Blackwell and B300 series—continues to outpace supply as data‑centre operators, cloud giants, and governments race to expand AI infrastructure.

This surge has pushed Nvidia’s revenue to more than $215.9 billion, with profits exceeding $120 billion, among the highest in the semiconductor industry.

Rally

The broader semiconductor sector has rallied alongside Nvidia, with Intel and AMD both posting double‑digit gains on strong earnings and renewed investor confidence.

Yet Nvidia remains the clear leader, commanding the majority of the data‑centre GPU market and benefiting from long‑term visibility as hyperscalers commit to multi‑year AI spending.

While the achievement underscores Nvidia’s dominance, analysts note that expectations are now exceptionally high.

Sustaining this momentum will depend on continued AI investment, stable macroeconomic conditions, and the company’s ability to stay ahead of rising competition and geopolitical constraints.