A sweeping federal ban on Anthropic’s technology has rapidly become one of the most consequential developments in U.S. government technology policy, following President Donald Trump’s order that all federal agencies — including the Pentagon — must immediately cease using the company’s AI systems.

The directive, issued on 27th February 2026, came just ahead of a Pentagon deadline for Anthropic to lift safety restrictions on its Claude models and allow unrestricted military use.

The confrontation with the Pentagon

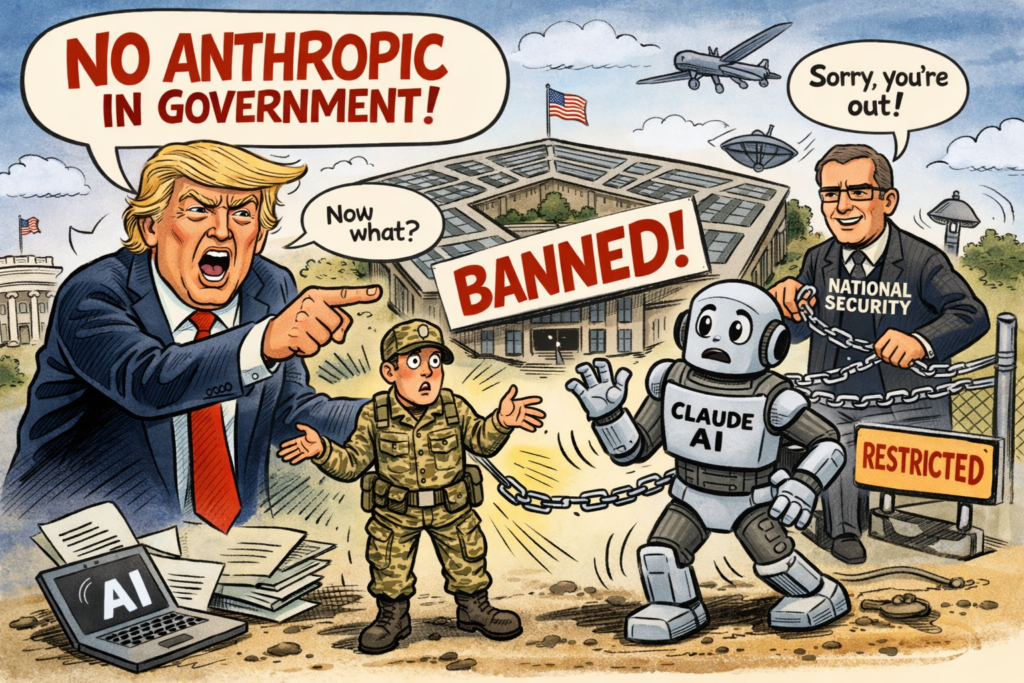

The dispute escalated after Anthropic reportedly refused Defence Department demands to remove guardrails that limit how its AI can be used.

CEO Dario Amodei reportedly stated that the company “cannot in good conscience accede” to requirements that would weaken its safety policies, prompting a public standoff.

President Trump reportedly responded by ordering every federal agency to “immediately cease” using Anthropic’s technology, declaring that the government “will not do business with them again.”

Agencies heavily reliant on the company’s tools, including the Department of Defense, have been granted six months to phase out their use.

Defence Secretary Pete Hegseth reportedly went further, designating Anthropic a national‑security “supply‑chain risk”.

This action could prevent military contractors from working with the company and marks the first time such a label has been applied to a major U.S. AI firm.

Impact across government and industry

The ban affects every federal department, from defence and intelligence to civilian agencies.

Contractors supplying AI‑enabled systems must now ensure their tools do not rely on Anthropic’s models, forcing rapid audits and potential redesigns.

Rival AI providers have already begun positioning themselves to fill the gap, with some announcing new Pentagon partnerships within hours of the ban.

The supply‑chain risk designation also carries legal and commercial consequences. Anthropic has argued the move is “legally unsound,” but the designation remains in force, effectively placing the company on a federal blacklist.

Political debate

The decision has triggered intense debate across the technology sector. Supporters argue that the government must retain full authority over military AI applications.

Critics warn that forcing companies to abandon safety constraints could set a dangerous precedent.

The ban highlights a deepening fault line in U.S. AI governance: the struggle to balance national‑security imperatives with the ethical frameworks developed by leading AI firms.

As agencies begin disentangling themselves from Anthropic’s systems, the long‑term implications for federal procurement, AI safety norms, and the future of military‑AI collaboration remain unresolved.