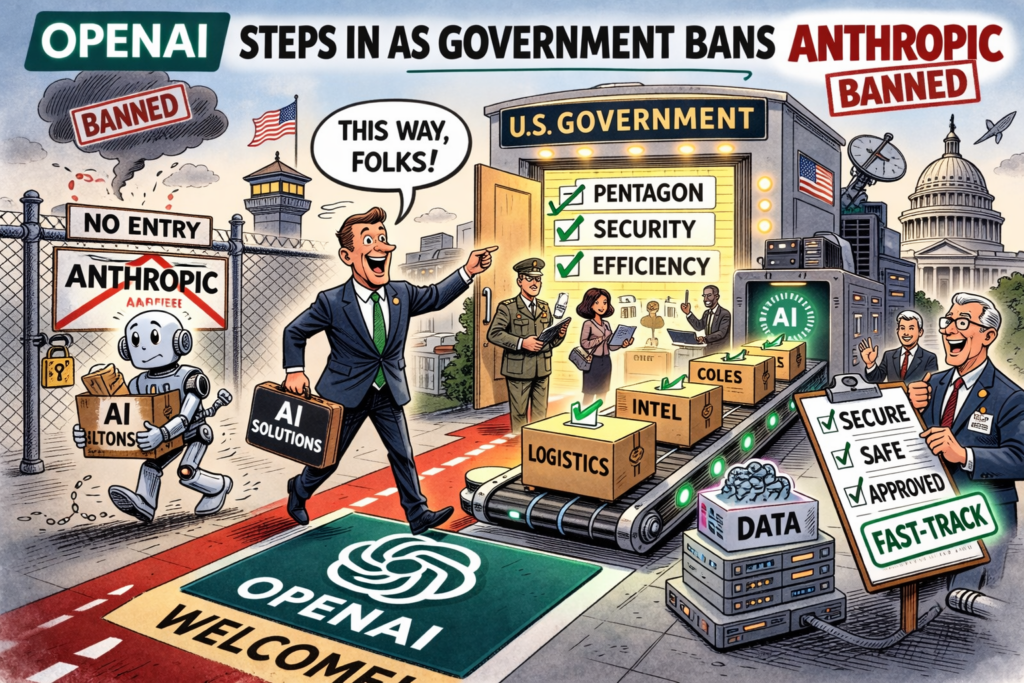

Following the abrupt federal ban on Anthropic’s Claude models, OpenAI has moved quickly to position itself as the primary replacement across U.S. government departments.

With Claude now designated a supply‑chain risk, agencies are likely scrambling to reconfigure AI workflows — and OpenAI’s systems appear to be emerging as the default alternative.

Integration

According to some observers, the company’s flagship GPT‑4.5 and its agentic development tools have already been integrated into several defence and civilian systems.

OpenAI’s reportedly longstanding compatibility with government‑approved platforms, including Azure and OpenRouter, has smoothed the transition. Unlike Anthropic, OpenAI has historically offered more flexible deployment options.

Industry analysts note that OpenAI’s recent hires — including agentic systems pioneer Peter Steinberger (OpenClaw) — signal a deeper push into autonomous task execution, a capability highly prized by defence and intelligence agencies.

The company’s agent frameworks are being trialled for logistics, simulation, and multilingual analysis, with early results described as “mission‑ready.”

Friction

However, the shift is not without friction. Some federal teams have reportedly built Claude‑specific workflows, particularly in legal, policy, and ethics‑driven domains where Anthropic’s safety constraints were seen as a feature, not a limitation.

Replacing those systems with GPT‑based models requires careful recalibration to avoid unintended consequences.

OpenAI’s rise also raises broader questions about vendor concentration. With Anthropic sidelined and Google’s Gemini models still undergoing federal evaluation, OpenAI now dominates the landscape, a position that may invite scrutiny from oversight bodies concerned about resilience and competition.

Still, for now, OpenAI appears to be the primary beneficiary of the Claude ban, and it will be attempting to fill the vacuum left by Anthropic.

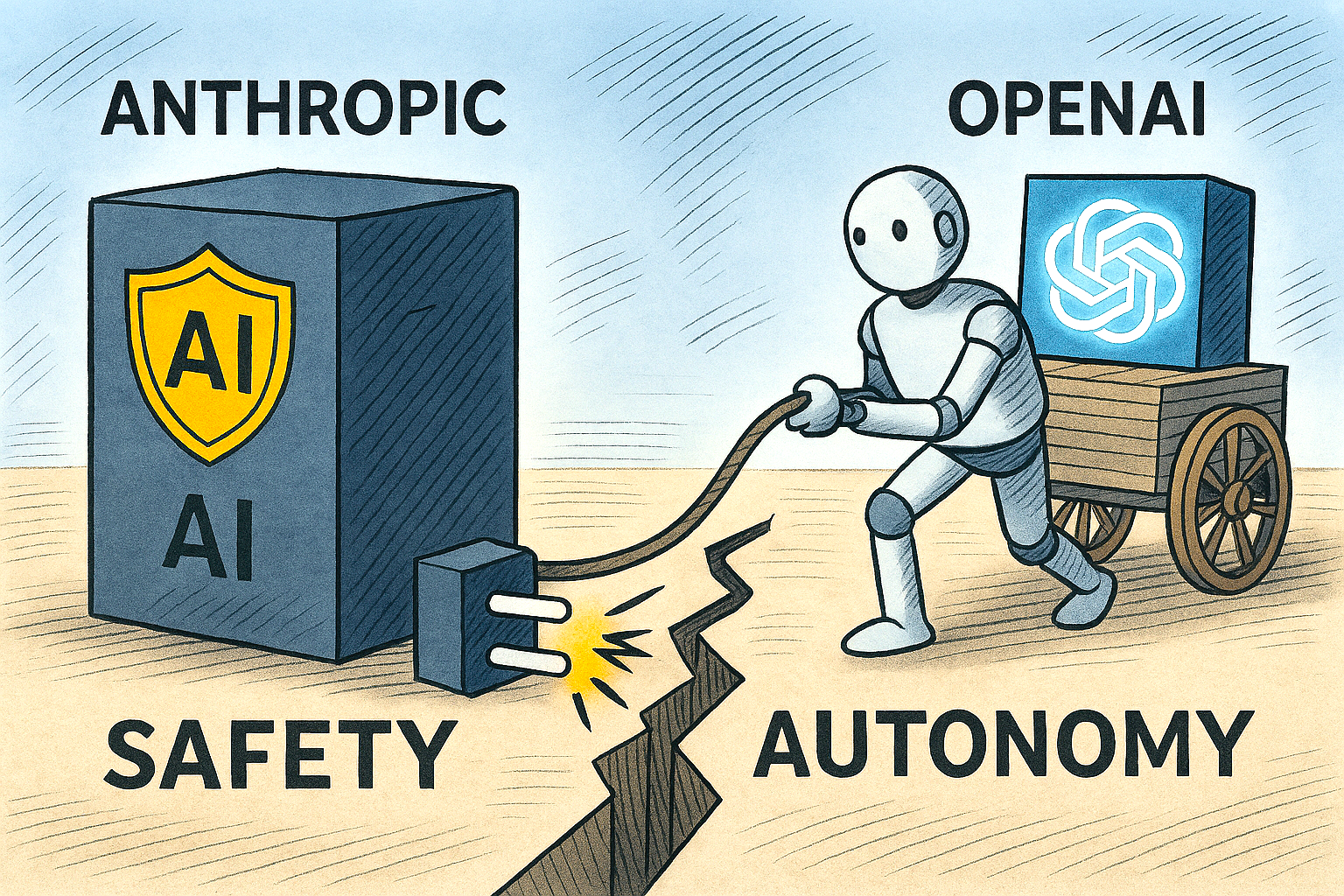

OpenAI vs Anthropic: Safety vs Autonomy in Federal AI

OpenAI’s agentic tools are likely filling the vacuum left by Anthropic’s ban, offering flexible deployment and autonomous task execution prized by defence and intelligence agencies.

While Claude prioritised safety constraints and ethical guardrails, OpenAI’s GPT‑based systems are expected to offer broader operational freedom.

This shift reflects a deeper philosophical divide: Anthropic’s models were designed to resist misuse, while OpenAI’s are engineered for adaptability and control.

As federal agencies recalibrate, the tension between safety‑first design and unrestricted autonomy is becoming the defining fault line in U.S. government AI strategy.

How long will it be before Anthropic is invited back to the table?