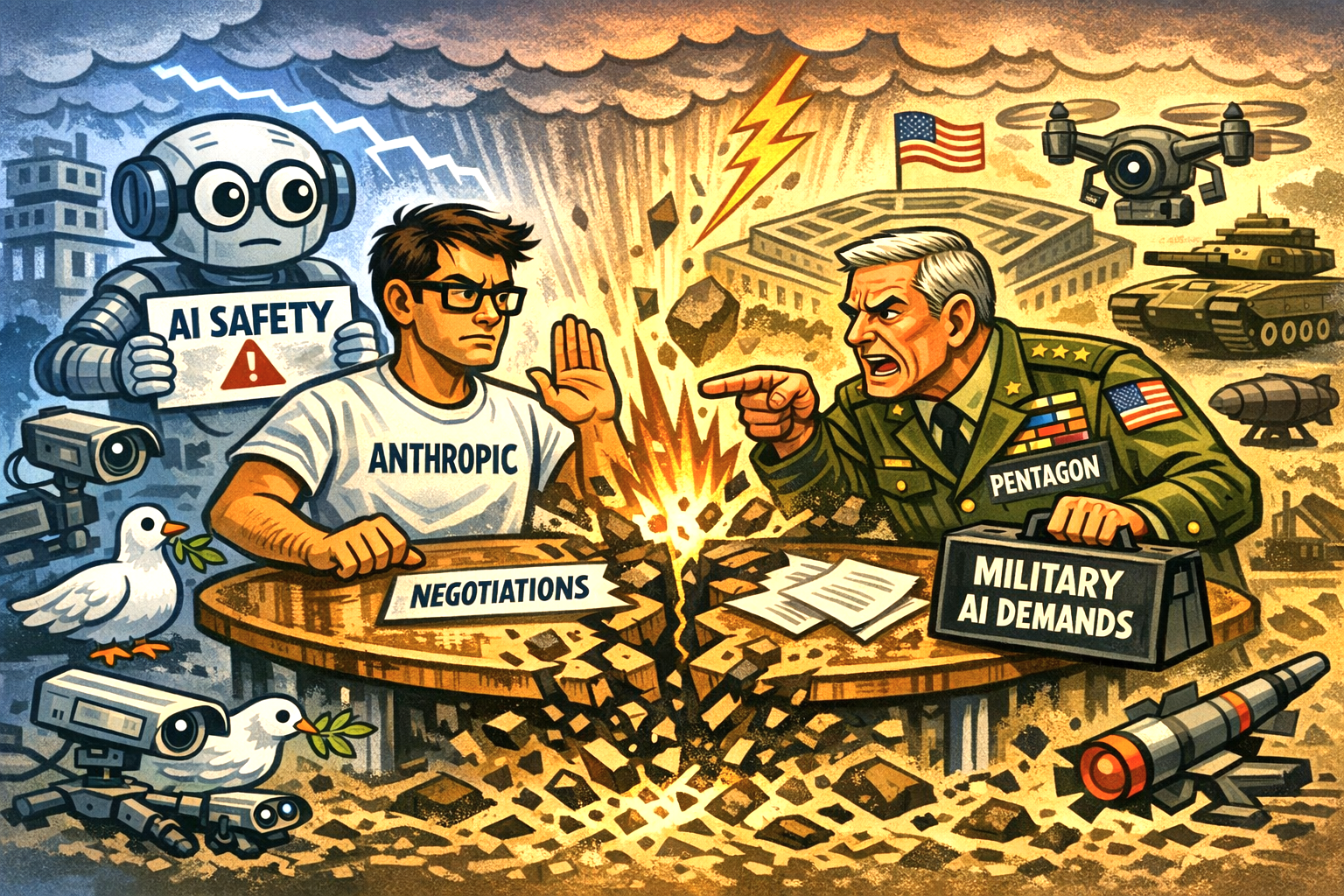

The Pentagon’s Chief Technology Officer, Emil Michael, has apparently ignited a fresh debate over the role of commercial artificial intelligence in national security, arguing that Anthropic’s Claude models could “pollute” the U.S. defence supply chain.

His comments, made in an interview with CNBC, offer the clearest rationale yet for the Department of Defense’s decision to designate Anthropic as a supply chain risk, an extraordinary step previously reserved for foreign adversaries.

At the heart of his argument is the claim that Claude’s “policy preferences”, embedded through Anthropic’s constitutional training approach, create an unacceptable misalignment with the Pentagon’s operational needs.

Risk

Michael reportedly argued that any AI system whose underlying values diverge from defence priorities risks producing ineffective outputs, whether in decision‑support tools, equipment design or battlefield logistics.

“We can’t have a company that has a different policy preference baked into the model… pollute the supply chain so our warfighters are getting ineffective weapons [and] ineffective protection,” he reportedly said.

Anthropic has responded forcefully, suing the Trump administration and calling the designation “unprecedented and unlawful”.

The company argues that the move jeopardises hundreds of millions of dollars in contracts and mischaracterises the nature of its technology.

Claude in the ecosystem?

Anthropic also notes that Claude continues to be used within parts of the U.S. military ecosystem, including by major defence contractors such as Palantir, underscoring the practical difficulty of an immediate transition away from its models.

Michael insists the decision is not punitive and emphasises that only a small fraction of Anthropic’s business comes from government work.

Nonetheless, the designation forces contractors to certify they are not using Claude in Pentagon‑related projects, setting up a potentially lengthy and politically charged dispute over how value‑aligned AI must be before it is allowed anywhere near defence infrastructure.

The episode highlights a broader tension: as AI systems become more opinionated by design, governments are increasingly asking whether “alignment” is a technical question — or a geopolitical one.