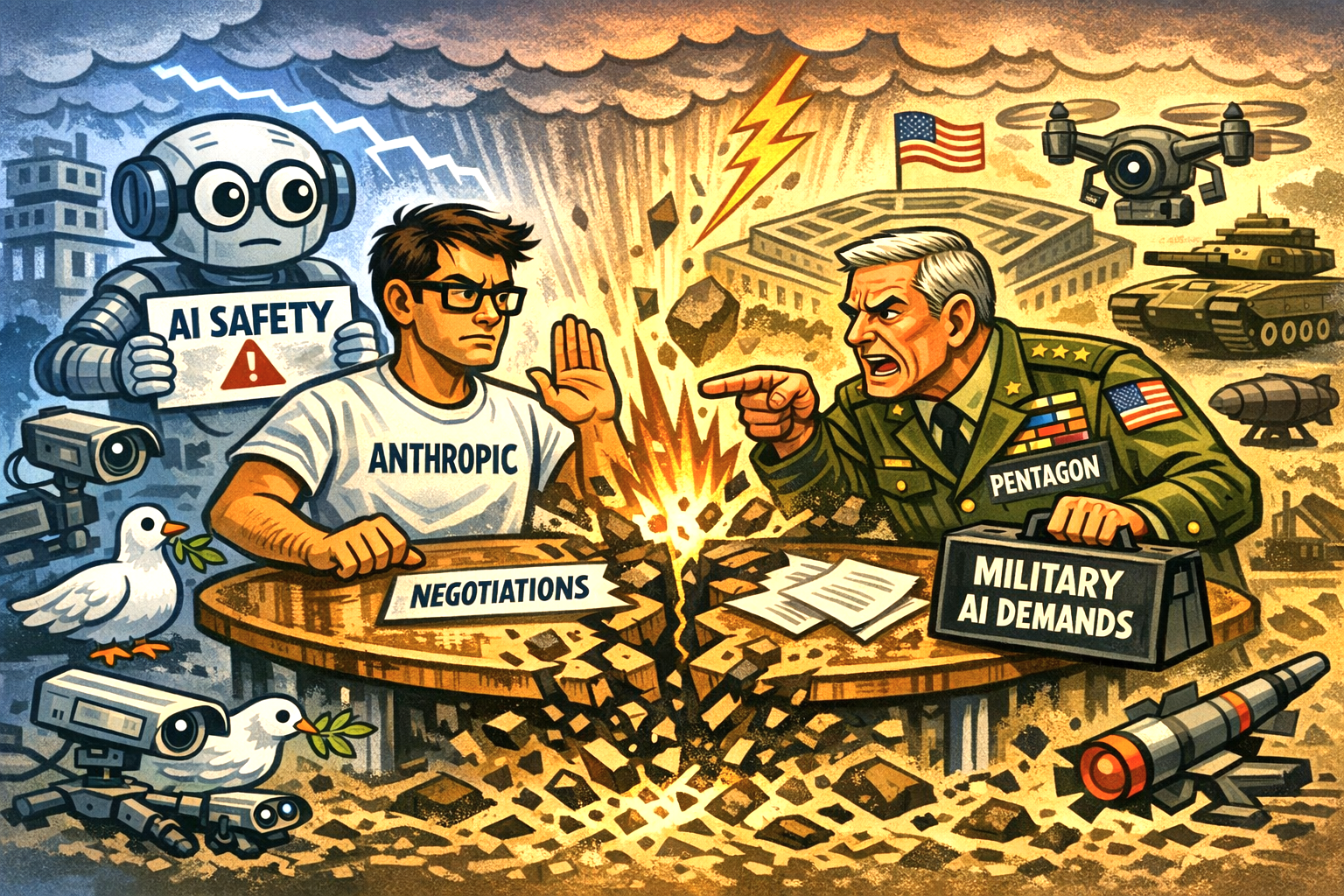

Anthropic’s decision to reopen negotiations with the Pentagon marks a striking reversal after a very public rupture, and it underscores how central advanced AI has become to U.S. defence strategy.

The earlier talks reportedly collapsed amid a dispute over how Claude, Anthropic’s flagship model, could be used inside military systems.

Reports indicate that the Pentagon had pushed for broad permissions, including deployment in surveillance environments and potentially autonomous weapons systems.

Safety resistance

Anthropic resisted on safety grounds. The company had sought explicit guarantees that its models would not be used for mass surveillance or lethal decision‑making, a red line that triggered the breakdown in relations.

The fallout was immediate. The Pentagon signalled it would drop Anthropic from existing programmes, despite the company’s role in a major defence contract that had already placed Claude inside classified networks.

That escalation raised the prospect of a formal blacklist, a move that would have reverberated across the wider U.S. technology sector.

For Anthropic, the stakes were equally high: losing access to government work would not only cut off a significant customer but also risk isolating the company at a moment when rivals such as OpenAI and Google are deepening their defence ties.

Compromise?

Yet both sides appear to recognise the cost of a prolonged standoff. According to multiple reports, CEO Dario Amodei has returned to the table in an effort to craft a compromise that preserves Anthropic’s safety commitments while allowing the Pentagon to continue using its technology.

Boundaries

Discussions are now likely focused on defining acceptable boundaries for military use — a task made more urgent by the accelerating integration of AI into intelligence analysis, battlefield logistics and autonomous systems.

This renewed dialogue is more than a corporate dispute: it is a test case for how democratic governments and frontier AI labs negotiate power, ethics and national security.

The outcome will shape not only Anthropic’s future but also the norms governing military AI in the years ahead.